Incremental learning

Incremental learning for semantic segmentation is the ability of a learning system (e.g., a neural network) to learn to segment and label new classes without forgetting previously learned ones or excessively degrading its performance on them.

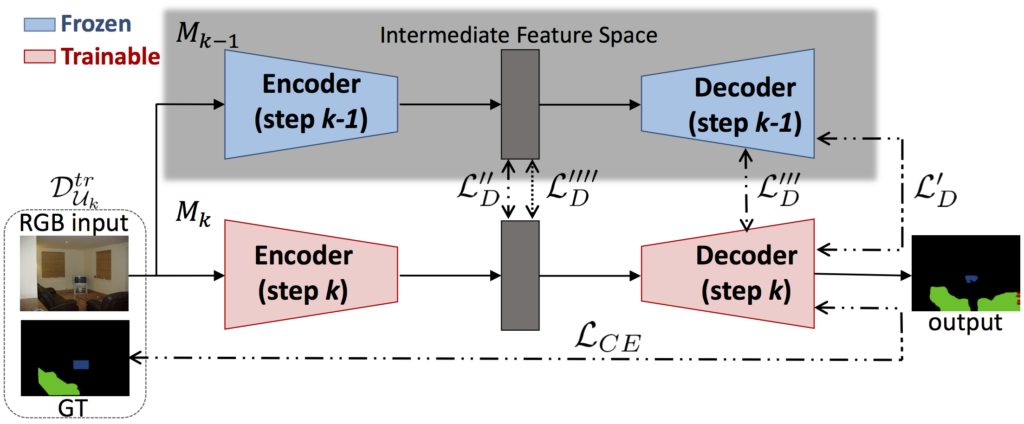

- In [1], the incremental learning problem for semantic segmentation is formally introduced. To tackle this task, we propose to distill the knowledge of the previous model to retain the information about previously learned classes, while updating the current model to learn the new ones. We propose several approaches that operate both on the output logits and on intermediate features. Unlike some recent frameworks, we do not store any image from previously learned classes: only the last model is needed to preserve high accuracy on these classes.

- The approach presented in [2] builds on our previous work [1], which is the first investigation of incremental learning for semantic segmentation. Compared to [1], the main contributions of this version are the following: (1) The distillation scheme on the output layer is improved and now also considers the uncertainty of the previous model's estimates. (2) A distillation constraint for the intermediate layers, inspired by Similarity-Preserving Knowledge Distillation (SPKD), is introduced. (3) A novel distillation scheme is proposed to enforce the similarity of multiple decoding stages simultaneously. (4) A new strategy that freezes only the first layers of the encoder is introduced, to keep the most task-agnostic part of the feature extractor unaltered. (5) Extensive experiments are conducted on many different scenarios. The results are reported on Pascal VOC2012 and also on the MSRC-v2 dataset, to validate the generalization properties of the proposed methods.

- In [3], we propose a continual learning scheme that shapes the latent space to reduce forgetting while improving the recognition of novel classes. Our framework, called SDR, is driven by three novel components, which can also be combined with existing techniques with little effort. First, prototype matching enforces latent space consistency on old classes, constraining the encoder to produce similar latent representations for previously seen classes in subsequent steps. Second, feature sparsification makes room in the latent space to accommodate novel classes. Finally, contrastive learning is employed to cluster features according to their semantics while pushing apart those of different classes. Extensive evaluation on the Pascal VOC2012 and ADE20K datasets demonstrates the effectiveness of our approach, which significantly outperforms state-of-the-art methods.
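
Two of the ingredients above, distillation on the output logits and prototype matching, can be illustrated with a minimal sketch. This is not the exact formulation of [1], [2] or [3]: the function names, the plain-list data layout (one list of logits or features per pixel) and the choice of a squared distance between prototypes are assumptions made for illustration only.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax of a list of floats."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]

def output_distillation_loss(old_logits, new_logits):
    """Mean per-pixel cross-entropy between the old model's probabilities
    and the new model's probabilities for the same (old) classes.

    old_logits: per-pixel lists of length n_old (previous model).
    new_logits: per-pixel lists of length n_old + n_new (current model),
                with the old classes stored first (assumed layout).
    """
    total = 0.0
    for old_px, new_px in zip(old_logits, new_logits):
        n_old = len(old_px)
        p_old = [math.exp(v) for v in log_softmax(old_px)]
        # Softmax over all classes, then keep only the old-class entries.
        log_p_new = log_softmax(new_px)[:n_old]
        total -= sum(p * lq for p, lq in zip(p_old, log_p_new))
    return total / len(old_logits)

def class_prototypes(features, labels):
    """Mean feature vector for each class label found in `labels`."""
    sums, counts = {}, {}
    for feat, lab in zip(features, labels):
        acc = sums.setdefault(lab, [0.0] * len(feat))
        for i, v in enumerate(feat):
            acc[i] += v
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: [v / counts[lab] for v in acc] for lab, acc in sums.items()}

def prototype_matching_loss(old_protos, new_protos):
    """Mean squared distance between prototypes of the classes that
    appear in both dictionaries (0.0 if there are none)."""
    shared = [c for c in old_protos if c in new_protos]
    if not shared:
        return 0.0
    total = 0.0
    for c in shared:
        total += sum((a - b) ** 2 for a, b in zip(old_protos[c], new_protos[c]))
    return total / len(shared)
```

In a training loop, terms of this kind would typically be added to the standard segmentation loss on the new classes with weighting factors. The distillation term is smallest when the new model reproduces the old model's predictions on the old classes, and the prototype term vanishes when the old-class prototypes have not moved.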

Coarse-to-fine learning

In coarse-to-fine learning, knowledge previously acquired on a different but related task is transferred to a new setting. We tackled two kinds of coarse-to-fine refinement in semantic segmentation: at the spatial level and at the semantic level.

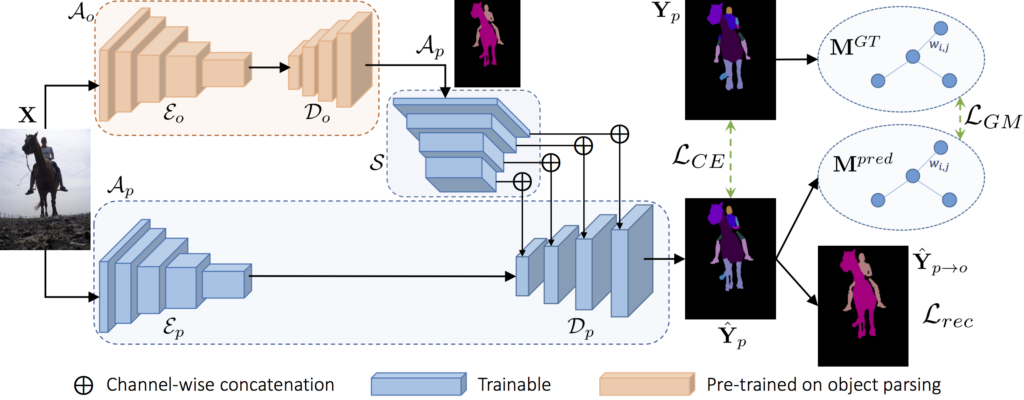

- The coarse-to-fine refinement at the spatial level is the decomposition of object-level classes into their respective parts. In [5], we investigated multi-object and multi-part parsing in the wild, which simultaneously handles all semantic objects in the scene and the parts within each object. Even strong recent baselines for semantic segmentation face additional challenges when dealing with this task. In particular, the simultaneous appearance of multiple objects and the inter-class ambiguity may cause inaccurate boundary localization and severe classification errors. For instance, animals often have a homogeneous appearance because fur covers the whole body. Additionally, some parts may look very similar across object classes, such as cow legs and sheep legs. Current algorithms suffer heavily from these issues. To address object-level ambiguity, we propose an object-level conditioning that serves as guidance for part parsing within the object. An auxiliary reconstruction module from parts to objects further penalizes part-level predictions in regions occupied by an object that does not contain the predicted parts. At the same time, to tackle part-level ambiguity, we introduce a graph-matching module that preserves the relative spatial relationships between ground-truth and predicted parts. Experimental results show that our approach achieves state-of-the-art results on this task, outperforming concurrent approaches.

- In the coarse-to-fine refinement at the semantic level, knowledge previously acquired on a coarser task is exploited to perform a finer task. For instance, in [5] we addressed the multi-level semantic segmentation task, where a deep neural network is first trained to recognize an initial, coarse set of a few classes. Then, in an incremental-like approach, it is adapted to segment and label new object categories hierarchically derived by subdividing the classes of the initial set. For example, a generic coarse class such as furniture is divided into finer classes such as desk, dresser or cabinet. We propose a set of strategies in which the output of the coarse classifier is fed to the architectures performing the finer classification. Furthermore, we investigate the possibility of predicting the different levels of semantic understanding jointly by exploiting a shared multi-task representation, which also helps to achieve higher accuracy.
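
As a rough illustration of how a coarse prediction can guide a finer one, the sketch below scales the fine-level scores of each group of sibling classes by the probability of their coarse parent, and then sums fine probabilities back to the coarse level (in the spirit of the parts-to-objects reconstruction mentioned above). It is only one simple way to keep the two levels consistent, not the architecture used in the cited work. The `HIERARCHY` dictionary, the unsplit `wall` class and the function names are hypothetical; only furniture and its three children come from the text.

```python
import math

# Hypothetical two-level hierarchy: coarse class -> list of fine classes.
HIERARCHY = {
    "furniture": ["desk", "dresser", "cabinet"],
    "wall": ["wall"],  # assumed coarse class that is not subdivided
}

def softmax(logits):
    """Numerically stable softmax of a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def fine_probabilities(coarse_probs, fine_logits, hierarchy=HIERARCHY):
    """Per-pixel fine-class probabilities conditioned on the coarse output.

    P(fine) = P(parent) * softmax of the fine logits over the siblings
    that share that parent.
    """
    fine = {}
    for parent, children in hierarchy.items():
        sibling_probs = softmax([fine_logits[c] for c in children])
        for child, p in zip(children, sibling_probs):
            fine[child] = coarse_probs[parent] * p
    return fine

def coarse_from_fine(fine_probs, hierarchy=HIERARCHY):
    """Rebuild coarse probabilities by summing the children of each parent."""
    return {
        parent: sum(fine_probs[c] for c in children)
        for parent, children in hierarchy.items()
    }

# Example for a single pixel.
coarse = {"furniture": 0.8, "wall": 0.2}
logits = {"desk": 1.0, "dresser": 0.0, "cabinet": 0.0, "wall": 0.0}
fine = fine_probabilities(coarse, logits)
best = max(fine, key=fine.get)
```

With this construction the fine probabilities always sum to one whenever the coarse ones do, and a fine class can never receive more probability than its coarse parent.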

Related papers

- [1] U. Michieli and P. Zanuttigh, Incremental Learning Techniques for Semantic Segmentation, Proceedings of the International Conference on Computer Vision (ICCV), Workshop on Transferring and Adapting Source Knowledge in Computer Vision (TASK-CV), 2019.

- [2] U. Michieli and P. Zanuttigh, Knowledge Distillation for Incremental Learning in Semantic Segmentation, Computer Vision and Image Understanding (CVIU), Elsevier, 2021.

- [3] U. Michieli and P. Zanuttigh, Continual Semantic Segmentation via Repulsion-Attraction of Sparse and Disentangled Latent Representations, IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021.